Let us be honest. A few years ago, most of us thought of AI as something that lived in research labs or science fiction films. Then, almost overnight, it was in our inboxes, in our hospitals, on our roads. What changed was not just computing power. What changed were the machine learning models underneath all of it.

So here is the real question people should be asking. Not whether AI matters, because that ship has sailed. The question now is: which models are actually doing the work, and why do some of them behave so differently from others?

We have put together this guide to cut through the noise. Whether you are a developer choosing a model for your next project, a business owner trying to understand what your AI vendor is actually selling you, or just someone who wants to understand the technology shaping their world, this breakdown will give you a grounded, practical view of what is powering modern AI today.

The AI & ML Landscape: Key Stats to Know

Before we get into the models themselves, it helps to have a sense of the scale we are talking about. These numbers still catch us off guard every time we look at them:

| Stat | What it measures | Detail and source |
| --- | --- | --- |
| 88% | AI adoption in at least one business function | Up from 78% year-over-year (McKinsey) |
| 40% | Annual growth rate of autonomous AI agent market | From $8.6B in 2025 to $263B by 2035 (Research Nester) |
| 2x | Enterprise AI spending vs. 2023 levels | Forecasted to double by 2026 |
| 92% | Companies planning to increase AI investment in 3 years | Phaedra Solutions / Salesforce |

That last one stood out to us. 92% of companies planning to increase AI investment is not a niche trend. At this point, it is nearly universal. And the models driving that investment are exactly what we are about to cover.

1. Transformers: The Model Behind the AI Revolution

If you have used ChatGPT, Google Search, or GitHub Copilot in the last two years, you have already seen a Transformer in action. You just did not know it. Introduced in a 2017 paper called “Attention Is All You Need,” the Transformer architecture completely upended how we thought about processing language.

Before Transformers, most language models read text sequentially, one word at a time, which meant they struggled to connect ideas that were far apart in a sentence. Transformers solved this through a mechanism called self-attention, which lets the model look at an entire sentence at once and figure out which words are most relevant to each other, regardless of where they sit in the text.

The practical effect of that shift was enormous. Because Transformers process sequences in parallel rather than one step at a time, they could be trained much faster, and scaled to billions of parameters in ways that simply were not possible before.
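To make self-attention concrete, here is a minimal sketch of scaled dot-product attention using only the Python standard library. The function names and the tiny two-dimensional token vectors are our own illustration, not taken from any particular model; real Transformers learn these vectors, apply separate learned projections for queries, keys, and values, and run on tensor libraries rather than plain lists.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over a whole sequence at once.

    queries, keys, values: lists of equal-length vectors, one per token.
    Returns one output vector per token plus the attention weights.
    """
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this token against every token in the sequence, near or far.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
                  for k in keys]
        weights = softmax(scores)
        # The output is a weighted mix of all value vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, values))
                 for j in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical token vectors, chosen by hand for illustration only.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.1]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

In this toy input, the first token puts more weight on the third token than on the second, even though the second is its immediate neighbor. Position plays no role in the score, which is the point the paragraph above makes.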

Why Transformers Changed Everything

Here is a simple example that makes the self-attention idea click. Take the sentence: “The animal did not cross the street because it was too wide.” What does “it” refer to? The street, not the animal. That seems obvious to a human reader, but figuring it out requires holding the broader context in mind while reading. Transformers do exactly this. They attend to the whole sentence simultaneously rather than losing context as they go.

That capability, while it sounds simple, turns out to be the foundation of almost every major AI capability we now take for granted.

Where Transformers Are Used

- Large Language Models (LLMs) like GPT-5, Claude, and Gemini

- Code generation tools like GitHub Copilot

- Machine translation (Google Translate)

- Document summarization and legal review tools

- Multimodal AI systems that process text, images, and audio together

Real-World Impact: By 2026, every major LLM, including GPT-5, Gemini 2.5 Pro, Claude 4, Llama 4, Mistral Large, and Qwen 3, is built on Transformer architecture. It is not just a model type anymore. It is the skeleton that modern AI is built around.

2. Large Language Models (LLMs): Language AI at Scale

Think of Large Language Models as Transformers that have been turned up to an almost incomprehensible scale. GPT-3, which felt like a breakthrough when it launched, had 175 billion parameters. Many of the models competing for top spots today use Mixture-of-Experts architectures, which activate only a subset of their parameters for each query, so they can deploy far greater effective capacity without needing proportionally more compute.
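A rough sketch of the Mixture-of-Experts idea, assuming simple top-k routing: a gate scores every expert, only the best k actually run, and their outputs are blended. The experts below are hypothetical one-line functions standing in for sub-networks, and the gate scores are hard-coded; a real router computes them from the input with learned weights.

```python
import math

def top_k_route(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    ranked = sorted(range(len(gate_scores)),
                    key=lambda i: gate_scores[i], reverse=True)
    chosen = ranked[:k]
    exps = [math.exp(gate_scores[i]) for i in chosen]
    total = sum(exps)
    return {i: e / total for i, e in zip(chosen, exps)}

def moe_forward(x, experts, gate_scores, k=2):
    """Run only the selected experts and blend their outputs."""
    routing = top_k_route(gate_scores, k)
    output = sum(weight * experts[i](x) for i, weight in routing.items())
    return output, routing

# Hypothetical experts: simple functions standing in for sub-networks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x * x]
# Hypothetical gate scores; a real router would derive these from x.
output, routing = moe_forward(3.0, experts,
                              gate_scores=[0.1, 2.0, -1.0, 2.0], k=2)
```

Only two of the four experts do any work for this input, which is where the compute saving comes from: total capacity grows with the number of experts, while per-query cost grows with k.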

What surprises most people about LLMs is how much more they do than autocomplete text. Feed one a complex legal contract and it will summarize the risk clauses. Ask it to write and debug code in three different languages and it will do that too. In agentic setups, they can plan and execute multi-step tasks with minimal human oversight, which is why the enterprise world has become so dependent on them so quickly.

The Leading LLMs in 2026

| ML Model | Type | Primary Use Case | Top Example |
| --- | --- | --- | --- |
| GPT-5 (OpenAI) | Proprietary LLM | Reasoning, coding, creative work | ChatGPT, Copilot |
| Gemini 2.5 Pro (Google) | Multimodal MoE LLM | Text, audio, image, video | Google Workspace, Search |
| Claude 4 (Anthropic) | Proprietary LLM | Analysis, long docs, safety | Claude.ai, enterprise |
| Llama 4 (Meta) | Open-weight LLM | Research, fine-tuning | Self-hosted, Hugging Face |
| DeepSeek V3/R1 | Open-weight LLM | Reasoning, cost-efficient | Open-source community |
| Qwen 3 (Alibaba) | Open-weight LLM | Multilingual, coding | Global open-source use |

Key Insight: The Race Is Now About Specialization

Something interesting happened in the LLM space over the past year. The performance gap between the top labs essentially closed. That sounds like good news, and it is, but it also changes how you should think about model selection. Picking the biggest model is no longer the obvious move. Picking the right model for your specific use case is.

A 2026 Amplitude survey found that 58% of users have already replaced traditional search with generative AI tools, and 71% said they want AI integrated directly into their shopping experiences. That kind of user behavior shift does not reverse.

For businesses looking to build LLM-powered products, partnering with a specialized LLM development company can significantly compress the gap between prototype and production-ready deployment.

3. Convolutional Neural Networks (CNNs): The Eyes of AI

If LLMs are the brain of modern AI, Convolutional Neural Networks are the eyes. CNNs were specifically designed to process grid-structured data, and images are the most obvious example. Rather than looking at each pixel in isolation, a CNN runs filters across the image, each one learning to detect something different, starting with simple edges and textures, then building up to complex shapes and eventually entire objects.

The clever bit is weight sharing. The same filter gets applied across the entire image, which massively reduces the number of parameters needed compared to older fully-connected architectures. That efficiency is a big part of why CNNs have held up as a workhorse even as newer models emerged.
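Here is a small sketch of what "running a filter across the image" means, again in plain Python. The 4x4 image and the hand-written edge filter are assumptions for illustration; a trained CNN learns its filters from data and stacks many layers of them.

```python
def convolve2d(image, kernel):
    """Slide one small filter over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # The same kernel weights are reused at every position.
            row.append(sum(image[r + i][c + j] * kernel[i][j]
                           for i in range(kh) for j in range(kw)))
        output.append(row)
    return output

# Hypothetical 4x4 image: dark left half, bright right half.
image = [[0, 0, 1, 1]] * 4
# A hand-written vertical-edge filter; trained CNNs learn filters like this.
edge_kernel = [[-1, 1], [-1, 1]]
feature_map = convolve2d(image, edge_kernel)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]

# Parameter comparison for this toy case.
conv_params = len(edge_kernel) * len(edge_kernel[0])  # 4 shared weights
dense_params = (4 * 4) * (3 * 3)  # 16 inputs x 9 outputs = 144 weights
```

The filter responds only in the middle column, where dark meets bright. A fully connected layer producing the same 3x3 output would need 144 weights instead of 4, and that gap widens quickly at real image sizes.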

Where CNNs Power AI Today

- Medical imaging: detecting tumors, reading X-rays, analyzing pathology slides

- Autonomous vehicles: identifying pedestrians, road signs, and lane markings

- Quality control in manufacturing: spotting product defects in real time

- Facial recognition and security systems

- Satellite imagery analysis for agriculture and urban planning

Stat: By 2026, an estimated 80% of initial healthcare diagnoses will involve some form of AI analysis, up from 40% of routine diagnostic imaging in 2024. CNNs sit at the center of that shift.

Vision Transformers have been gaining ground on CNNs in benchmark competitions, and they will probably continue to do so. But in real deployed systems, CNNs still dominate. Years of optimization, a well-understood behavior profile, and lower inference costs keep them firmly in the mix.

4. Recurrent Neural Networks (RNNs) and LSTMs: AI with Memory

Here is a good way to think about Recurrent Neural Networks. Imagine reading a novel, but every time you turn to a new page, you forget everything that came before it. That is basically the problem RNNs were designed to solve. They process sequential data by passing a hidden state forward through each step, carrying a kind of memory of what came before to inform what comes next.

Standard RNNs had a well-known flaw, though. During training, the error signal shrinks as it is passed back through many steps, so the network struggles to learn from information that appeared far back in the sequence. It is called the vanishing gradient problem, and it made RNNs unreliable for long sequences. Long Short-Term Memory networks, or LSTMs, tackled this head-on in 1997 by adding gating mechanisms that decide what information to retain, what to discard, and what to pass forward at each step. It was a genuinely elegant solution.
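The gating idea is easier to see in code. Below is a sketch of one step of an LSTM cell, reduced to scalars so it fits on a page. The weights are hand-picked assumptions chosen to make the behavior visible; a trained LSTM learns them, and real cells operate on vectors and matrices.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def lstm_step(x, h_prev, c_prev, w):
    """One step of a scalar LSTM cell.

    w maps each gate name to (input weight, hidden weight, bias).
    """
    def gate(name, squash):
        wx, wh, b = w[name]
        return squash(wx * x + wh * h_prev + b)

    forget = gate("forget", sigmoid)          # what to discard from memory
    write = gate("input", sigmoid)            # how much new information to retain
    candidate = gate("candidate", math.tanh)  # the new information itself
    expose = gate("output", sigmoid)          # what to pass forward

    c = forget * c_prev + write * candidate   # updated cell state (long-term memory)
    h = expose * math.tanh(c)                 # updated hidden state
    return h, c

# Hypothetical hand-picked weights; a trained LSTM learns these.
weights = {
    "forget": (0.0, 0.0, 4.0),     # large bias: keep almost all memory
    "input": (1.0, 0.0, -2.0),
    "candidate": (1.0, 0.0, 0.0),
    "output": (0.0, 0.0, 4.0),
}

h, c = 0.0, 0.0
for x in [3.0, 0.0, 0.0, 0.0, 0.0]:  # one strong signal, then silence
    h, c = lstm_step(x, h, c, weights)
```

With the forget gate held close to 1, the cell state still carries most of the first input four steps later. That additive path through the cell state is what lets LSTMs hold on to information that a plain RNN would lose.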

Where RNNs and LSTMs Are Used

- Time-series forecasting: financial markets, demand prediction, energy consumption

- Speech recognition systems

- Music generation and audio synthesis

- Anomaly detection in network traffic and sensor data

- Legacy natural language processing pipelines

Transformers have taken over most NLP tasks, and fairly so. But LSTMs still have a real edge in time-series work, particularly where compute efficiency matters. A research comparison on ecological forecasting found that LSTMs often beat Transformers when working with shorter input windows, which makes them a smarter, leaner choice in a lot of enterprise data pipelines.

5. Generative Adversarial Networks (GANs): The Creative Engine

Ian Goodfellow came up with the GAN idea in 2014, apparently during a conversation at a bar. Whether that story is apocryphal or not, the concept is genuinely clever. Two neural networks compete against each other: a Generator that tries to create convincing synthetic content, and a Discriminator that tries to tell the fakes from the real thing. Each gets better by trying to beat the other. Eventually, the Generator gets so good that it produces outputs almost indistinguishable from reality.

That adversarial dynamic produced some remarkable results, from photorealistic faces of people who do not exist to synthetic medical scans that can train diagnostic models without involving real patient data.
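The competition can be written down as two loss functions. This sketch assumes the standard binary cross-entropy discriminator loss and the commonly used non-saturating generator loss; the score lists are made-up numbers standing in for a discriminator's outputs, not results from a trained model.

```python
import math

def discriminator_loss(scores_on_real, scores_on_fake):
    """Binary cross-entropy: reward calling real samples real and fakes fake."""
    real_term = -sum(math.log(s) for s in scores_on_real) / len(scores_on_real)
    fake_term = -sum(math.log(1.0 - s) for s in scores_on_fake) / len(scores_on_fake)
    return real_term + fake_term

def generator_loss(scores_on_fake):
    """Non-saturating generator loss: reward fooling the discriminator."""
    return -sum(math.log(s) for s in scores_on_fake) / len(scores_on_fake)

# Hypothetical discriminator outputs: probability that a sample is real.
early_fake_scores = [0.05, 0.10]  # fakes are easy to spot
late_fake_scores = [0.45, 0.50]   # fakes are nearly indistinguishable
real_scores = [0.90, 0.95]

g_early = generator_loss(early_fake_scores)
g_late = generator_loss(late_fake_scores)
d_early = discriminator_loss(real_scores, early_fake_scores)
d_late = discriminator_loss(real_scores, late_fake_scores)
```

As the fakes improve, the generator's loss falls and the discriminator's loss rises. Training alternates between the two networks, each taking a gradient step on its own loss.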

Real-World Applications of GANs

- Image synthesis: generating photorealistic faces, products, and scenes

- Data augmentation: creating training data for other ML models

- Medical imaging: generating synthetic patient scans to train diagnostic models

- Style transfer in creative tools

- Deepfake detection (ironically, GANs power both creation and detection)

Security Concern: The FBI flagged a significant rise in AI-powered scams in 2024, including GAN-generated phishing content and deepfake videos impersonating executives. That dual-use problem, the same tool creating and detecting fakes, has made GAN research increasingly intertwined with the AI security field.

6. Diffusion Models: The State of the Art in Image Generation

If you have used Midjourney, DALL-E, or Stable Diffusion recently, you have used a diffusion model. The idea is almost counterintuitive at first. You take a real image and gradually destroy it by adding noise, step by step, until it looks like pure static. Then you train the model to reverse that process, learning to reconstruct something coherent from noise. At inference time, you start with random noise and let the model denoise it into whatever you asked for.

This approach largely replaced GANs at the top end of image generation because it sidesteps one of GANs’ chronic problems: mode collapse. GANs can get stuck producing variations of the same outputs. Diffusion models tend to produce higher fidelity results with much greater variety.
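The "gradually destroy it with noise" half of the process is simple enough to sketch. The version below assumes a linear noise schedule and the closed-form shortcut that jumps directly to any noise level, as used in common denoising diffusion setups. The four-number "image" and all names are illustrative, and the denoising network itself is out of scope here.

```python
import math
import random

def make_alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative signal-retention factors for a linear noise schedule."""
    alpha_bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, alpha_bar, rng):
    """Jump straight to a noise level: sqrt(a) * x0 + sqrt(1 - a) * noise."""
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [math.sqrt(alpha_bar) * p + math.sqrt(1.0 - alpha_bar) * n
             for p, n in zip(pixels, noise)]
    # In the noise-prediction setup assumed here, the denoiser is trained
    # to recover `noise` from `noisy`.
    return noisy, noise

rng = random.Random(0)
alpha_bars = make_alpha_bars(num_steps=1000)
image = [1.0, -1.0, 0.5, 0.0]  # hypothetical 4-pixel "image"
slightly_noisy, _ = add_noise(image, alpha_bars[0], rng)
pure_static, _ = add_noise(image, alpha_bars[-1], rng)
```

At the first step almost all of the original signal survives. By the last step the retention factor is close to zero, so what remains is essentially random noise, which is exactly where generation starts at inference time.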

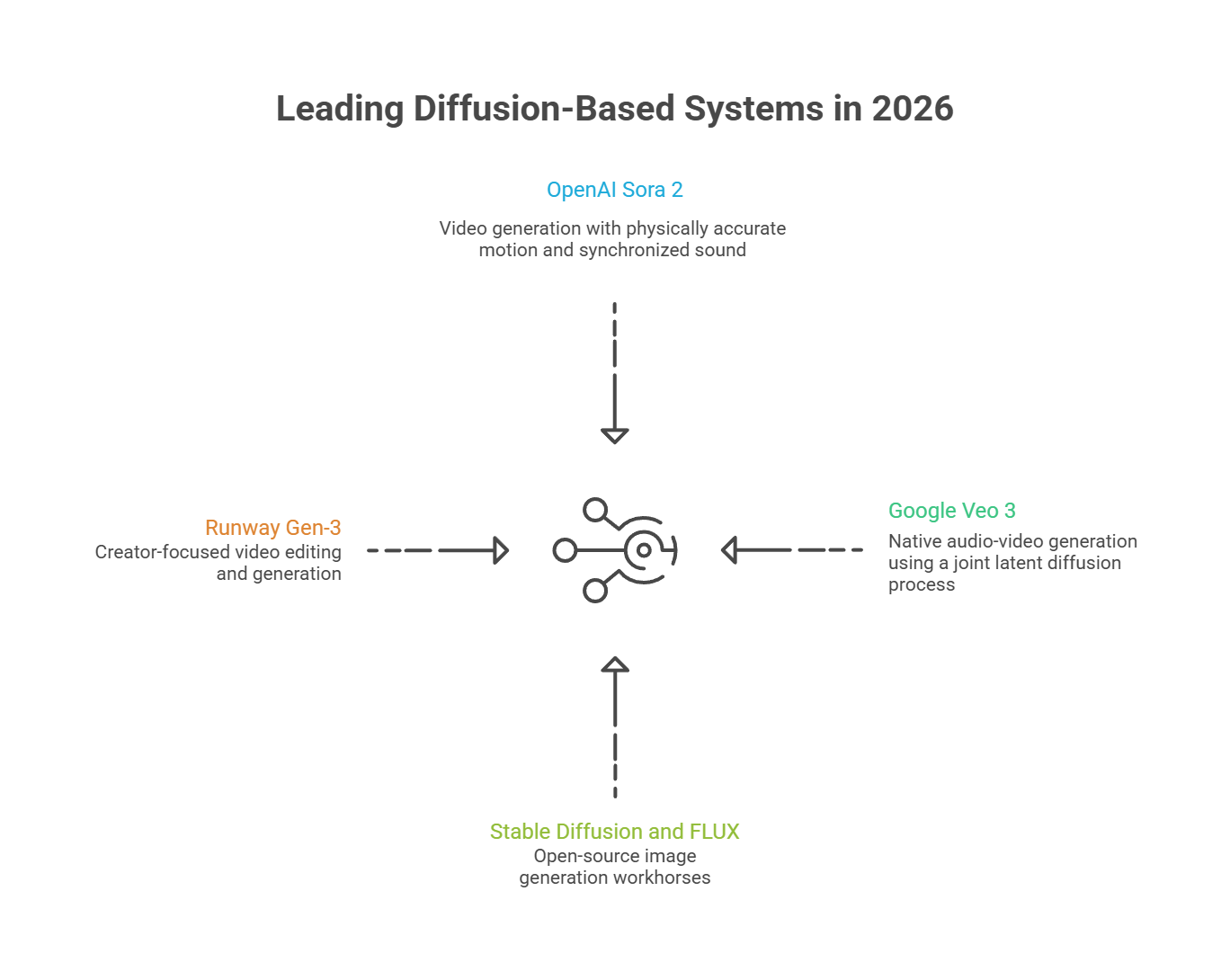

Leading Diffusion-Based Systems in 2026

- OpenAI Sora 2: video generation with physically accurate motion and synchronized sound

- Google Veo 3: native audio-video generation using a joint latent diffusion process

- Stable Diffusion and FLUX: open-source image generation workhorses

- Runway Gen-3: creator-focused video editing and generation

One thing worth noting: even the best video generation models still struggle with human motion. Recent benchmarks put accuracy at around 50% for specific human-action tasks. It is an active research problem, and closing that gap is one of the more interesting contests in AI right now.

7. Reinforcement Learning (RL) Models: AI That Learns by Doing

Most machine learning models learn from labeled examples. You show them thousands of images of cats with the label “cat,” and eventually they learn what a cat looks like. Reinforcement Learning works differently. The model, called an agent, takes actions in an environment and receives rewards or penalties based on the outcome. Over millions of iterations, it figures out which sequences of decisions produce the best results without anyone explicitly telling it the rules.
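As a minimal sketch of that loop, here is tabular Q-learning, one classic RL algorithm, on a toy environment we made up: a five-cell corridor where the only reward sits at the far right. Nothing tells the agent to walk right; it works that out from rewards alone. The hyperparameters are illustrative assumptions.

```python
import random

def train_q_table(num_states=5, episodes=500, alpha=0.5, gamma=0.9,
                  epsilon=0.2, seed=0):
    """Tabular Q-learning on a corridor: reward 1 only at the rightmost state."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(num_states)]  # q[state][action]; 0=left, 1=right
    goal = num_states - 1
    for _ in range(episodes):
        state = 0
        while state != goal:
            # Explore sometimes (or on ties), otherwise take the best-known action.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            reward = 1.0 if next_state == goal else 0.0
            # Nudge the estimate toward reward plus discounted future value.
            best_next = 0.0 if next_state == goal else max(q[next_state])
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = next_state
    return q

q_table = train_q_table()
policy = ["right" if right > left else "left" for left, right in q_table[:-1]]
```

After training, the learned policy is "right" in every cell. The agents mentioned in this section use neural networks in place of the lookup table, but the reward-driven update follows the same principle.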

This is how DeepMind’s AlphaGo shocked the world in 2016 by beating Lee Sedol, one of the strongest Go players in the world, a feat most experts thought was still a decade away. It is also how OpenAI trained agents to play Dota 2 at a professional level. More practically, it is the mechanism behind RLHF, Reinforcement Learning from Human Feedback, which is how models like GPT-5 and Claude get fine-tuned to be helpful and safe rather than just statistically plausible.

Where RL Is Deployed

- Robotics: training robots for warehouse automation, surgery, and navigation

- Game AI and simulation

- Recommendation systems (YouTube, Netflix, TikTok)

- Supply chain optimization and dynamic pricing

- Drug discovery: optimizing molecular designs

Breakthrough: In 2025, several RL-trained reasoning models hit gold-medal level performance at major international math competitions. Researchers had not expected that milestone to arrive until at least 2026. It is a useful reminder that these milestones keep arriving sooner than predicted.

8. Random Forests and Gradient Boosting: The Reliable Workhorses

We want to push back slightly on a common assumption here. Not every business AI problem needs a neural network. In fact, if your data lives in a spreadsheet and your outcome is a number or a category, there is a good chance that Random Forests or Gradient Boosting will outperform, or at least match, a neural network, while being far easier to interpret and maintain.

These models do not get the press coverage of Transformers or diffusion models. But they are running quietly inside fraud detection systems, credit scoring engines, demand forecasting pipelines, and insurance claims processors at companies you use every day.

What Is a Random Forest?

A Random Forest is exactly what it sounds like: an ensemble of decision trees, each trained on a different random sample of the data and, typically, a random subset of the features. When you ask it to classify something, all the trees vote, and the majority wins; for numeric predictions, their outputs are averaged. The beauty of this approach is that individual trees can be wrong in all sorts of different ways, but their errors tend to cancel out across the ensemble. The collective result ends up being surprisingly robust.
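A stripped-down sketch of the voting idea, under loud simplifying assumptions: each "tree" here is a single-split stump on one feature, and the only randomness is the bootstrap sample each tree trains on. Production libraries grow deeper trees and also randomize which features each split may use. The data and function names are invented for illustration.

```python
import random
from collections import Counter

def fit_stump(points):
    """Find the single threshold that best splits 1-D points into two classes."""
    best = None
    for threshold, _ in points:
        for left_label in (0, 1):
            errors = sum((left_label if x <= threshold else 1 - left_label) != y
                         for x, y in points)
            if best is None or errors < best[0]:
                best = (errors, threshold, left_label)
    _, threshold, left_label = best
    return lambda x: left_label if x <= threshold else 1 - left_label

def fit_forest(points, num_trees=25, seed=0):
    """Train each tree on its own bootstrap sample (random draw with replacement)."""
    rng = random.Random(seed)
    return [fit_stump([rng.choice(points) for _ in points])
            for _ in range(num_trees)]

def predict(forest, x):
    """Every tree votes; the majority label wins."""
    votes = Counter(tree(x) for tree in forest)
    return votes.most_common(1)[0][0]

# Hypothetical data: the label is 1 when the feature is above roughly 5.
data = [(1, 0), (2, 0), (3, 0), (4, 0), (6, 1), (7, 1), (8, 1), (9, 1)]
forest = fit_forest(data)
low_prediction = predict(forest, 1)
high_prediction = predict(forest, 9)
```

Individual stumps place their threshold in slightly different spots depending on which points they happened to sample. The vote smooths over those differences.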

What Is Gradient Boosting?

Gradient Boosting takes a different approach. Instead of training trees independently and combining them, it builds them sequentially. Each new tree focuses specifically on the mistakes the previous trees made. XGBoost, LightGBM, and CatBoost are the dominant implementations, and they show up in Kaggle competition leaderboards and production ML pipelines with roughly equal frequency, which tells you something about how reliable they are.
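The "each new tree focuses on the previous mistakes" idea can be sketched in a few lines for squared-error regression, where the mistakes are simply the residuals. This is a teaching sketch with one-split stumps and made-up data, not how XGBoost, LightGBM, or CatBoost are implemented; those add regularization, smarter split finding, and much more.

```python
def fit_regression_stump(xs, residuals):
    """Best single split that predicts the mean residual on each side."""
    best = None
    for threshold in xs:
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right) if right else 0.0
        error = sum((r - (left_mean if x <= threshold else right_mean)) ** 2
                    for x, r in zip(xs, residuals))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean

def fit_boosted(xs, ys, num_trees=50, learning_rate=0.3):
    """Build trees one after another, each fit to the current mistakes."""
    base = sum(ys) / len(ys)
    trees = []
    predictions = [base] * len(xs)
    for _ in range(num_trees):
        residuals = [y - p for y, p in zip(ys, predictions)]  # what is still wrong
        tree = fit_regression_stump(xs, residuals)
        trees.append(tree)
        predictions = [p + learning_rate * tree(x)
                       for p, x in zip(predictions, xs)]
    return lambda x: base + learning_rate * sum(tree(x) for tree in trees)

def mean_squared_error(model, xs, ys):
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Hypothetical data with two jumps that a single stump cannot capture.
xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 1.1, 3.0, 3.2, 5.0, 5.1, 5.3]
one_tree = fit_boosted(xs, ys, num_trees=1)
many_trees = fit_boosted(xs, ys, num_trees=50)
```

Comparing `mean_squared_error(one_tree, xs, ys)` with `mean_squared_error(many_trees, xs, ys)` shows the training error dropping as trees are added. The learning rate keeps any single tree from overcorrecting.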

Where These Models Excel

- Credit risk scoring and fraud detection in financial services

- Customer churn prediction and lead scoring in CRM systems

- Healthcare insurance claims processing

- E-commerce demand forecasting

- Feature engineering pipelines feeding into deep learning models

Industry practitioners consistently recommend starting with gradient boosting for structured business data before reaching for neural networks. The performance difference is often smaller than expected, and the operational simplicity is vastly greater. Organizations that work with a dedicated machine learning development company often find that selecting the right model architecture from the outset saves significant rework down the line.

9. Multimodal Models: AI That Sees, Hears, and Reads

Until recently, most AI models were specialists. One model handled images, another handled text, another handled audio. You would stitch them together in a pipeline and hope the outputs from one made sense as inputs to the next. Multimodal models throw that approach out entirely.

A multimodal model processes text, images, audio, and video in a single unified architecture, learning shared representations that let it reason across all of them at once. That is what lets you hand a model a PDF containing both written notes and embedded charts, and get back a coherent analysis that draws on both.

Why Multimodality Matters for Business

- Healthcare: AI that analyzes both patient notes and X-rays together

- Retail: combining product images with customer conversation history for personalized recommendations

- Manufacturing: visual defect detection combined with sensor log analysis

- Security: integrating access logs with video surveillance footage

- Legal: reading both scanned contracts and audio recordings for discovery

What has shifted recently is the expectation baseline. A year ago, multimodal capability was a premium differentiator. Now Google Gemini 2.5 Pro, GPT-5, and Claude 4 all treat text, images, and documents as standard inputs. If your AI system cannot handle mixed media, it is starting to look behind the curve.

10. Federated Learning and Edge AI: Private, Distributed Intelligence

Here is a problem that does not get discussed enough in AI coverage. Some of the most valuable data for training AI, medical records, financial transactions, private communications, simply cannot be centralized without creating serious legal, ethical, and security risks. Federated Learning exists specifically to address that.

Rather than pulling raw data to a central server, federated learning sends the model to the data. Each device or organization trains a local version and ships back only the model updates, never the underlying data. Those updates get aggregated into a global model that gets smarter without ever directly accessing anyone’s sensitive information.
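Here is a minimal sketch of that loop, assuming the simplest aggregation rule: a weighted average of client weights, usually called federated averaging. The three "clients", their data, and the tiny linear model are invented for illustration. Real deployments add secure aggregation, client sampling, and privacy protections that this sketch omits.

```python
def local_update(global_weights, local_data, learning_rate=0.1, epochs=20):
    """Train on private data and return only the new weights, never the data.

    The model here is y = w * x + b, fit by plain gradient descent.
    """
    w, b = global_weights
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in local_data) / len(local_data)
        grad_b = sum(2 * (w * x + b - y) for x, y in local_data) / len(local_data)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return (w, b)

def federated_average(client_weights, client_sizes):
    """Combine client models, weighting each by how much data it trained on."""
    total = sum(client_sizes)
    w = sum(cw[0] * n for cw, n in zip(client_weights, client_sizes)) / total
    b = sum(cw[1] * n for cw, n in zip(client_weights, client_sizes)) / total
    return (w, b)

# Hypothetical private datasets, all drawn from y = 2x + 1.
clients = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(0.5, 2.0), (1.5, 4.0), (2.0, 5.0)],
    [(0.2, 1.4), (1.2, 3.4)],
]

global_weights = (0.0, 0.0)
for _ in range(30):  # communication rounds
    updates = [local_update(global_weights, data) for data in clients]
    global_weights = federated_average(updates, [len(data) for data in clients])
```

The server only ever sees the `(w, b)` pairs, yet the global model settles close to the shared underlying relationship (w near 2, b near 1). The raw data points never leave `local_update`.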

Key Use Cases

- Healthcare: training diagnostic models across hospitals without sharing patient records

- Finance: fraud detection across bank branches without centralizing transaction data

- Smart devices: improving voice recognition on-device without uploading audio

- Legal and compliance: cross-organizational risk models that respect data sovereignty

Scale of Edge AI: Edge AI, which runs models directly on devices rather than routing everything through the cloud, is projected to power over 40% of IoT devices by 2026. Paired with federated learning, this signals a fundamental change in where AI computation actually happens. It is moving out of centralized data centers and into the objects and institutions of everyday life.


Quick Reference: Which Model for Which Task?

| Your Task | Best Model Type | Why |
| --- | --- | --- |
| Understand or generate text | LLM / Transformer | Built for language at scale |
| Classify or detect in images | CNN or Vision Transformer | Spatial feature extraction |
| Generate images or video | Diffusion Model | High-quality, stable synthesis |
| Forecast time series | LSTM or Gradient Boosting | Sequential memory & efficiency |
| Tabular data / business KPIs | Random Forest / XGBoost | Fast, interpretable, reliable |
| Train robots or game agents | Reinforcement Learning | Learns from environment rewards |
| Work with private distributed data | Federated Learning | No raw data centralization |
| Process text + images + audio | Multimodal Transformer | Unified cross-modal reasoning |

What Is Coming Next: Trends Shaping ML in 2026 and Beyond

Agentic AI: The shift from AI as a question-answering tool to AI as an autonomous task-completer is well underway. The autonomous AI agent market is projected to grow at 40% annually, reaching $263 billion by 2035. That trajectory is hard to overstate.

Mixture of Experts (MoE): Rather than activating all parameters for every query, MoE architectures intelligently route each input to relevant sub-networks. This lets models scale their effective capacity without the proportional compute costs that made earlier scaling unsustainable.

Explainable AI (XAI): The XAI market is projected to hit $4.2 billion by 2027, and the demand is being driven by regulators and risk teams, not researchers. Finance, healthcare, and insurance cannot deploy black-box models at scale. They need models that show their reasoning.

Inference-Time Scaling: The next wave of performance gains will not come from simply training bigger models. They will come from smarter inference strategies, including chain-of-thought reasoning, retrieval-augmented generation, and multi-agent coordination.

AI Governance: The AI governance market is valued at $308 million today and is forecast to surpass $1.42 billion by 2030. Seventy percent of organizations are expected to have formal governance frameworks in place by 2026. The era of deploying AI without accountability structures is ending.

Conclusion

We started this guide by saying AI is no longer a distant promise. The machine learning models we have covered here are the reason for that. Transformers and LLMs gave machines fluency with language. CNNs gave them the ability to see. Diffusion models unlocked creative generation at a quality level that still feels a bit surreal. RL taught systems to improve through experience. Gradient boosting and Random Forests kept enterprise data science reliable and interpretable. Federated learning made it possible to train on sensitive data without centralizing it.

None of these models is universally best. Each one is a tool built for a different kind of problem. The teams that get the most out of AI are not the ones chasing the newest or largest model. They are the ones who understand what each model is good at and deploy accordingly. Understanding which model does what, and why, is no longer optional knowledge for technical professionals. As AI adoption climbs to 88% of major organizations and autonomous agents move from assistants to employees, knowing how these systems work is the literacy of the modern era. Companies seeking to act on this knowledge are increasingly turning to providers of software development services in the USA to build and deploy custom ML solutions that align with their specific business objectives.

The age of AI experimentation is over. The age of deployment is here.

Frequently Asked Questions (FAQs)

1. What is the difference between a machine learning model and an AI model?

The terms get used interchangeably a lot, but there is a meaningful distinction. Machine learning is a specific method for building AI systems, one where the system learns patterns from data rather than following hand-coded rules. An AI model is a broader term that could include rule-based systems, expert systems, or ML-based models. In practice, when people say “AI model” today, they almost always mean an ML model, most often a neural network of some kind. But not all AI is ML, and keeping that distinction in mind helps when evaluating vendor claims.

2. Do I need a large language model for every AI use case?

Definitely not, and this is one of the most common and costly mistakes in AI adoption. LLMs are extraordinary at tasks involving language: summarization, classification, generation, translation, and reasoning over text. But if your problem is fraud detection on tabular transaction data, XGBoost will likely outperform an LLM and cost a fraction of the compute. If your problem is image classification, a well-tuned CNN or Vision Transformer is the right tool. LLMs are genuinely transformative, but treating them as the answer to every problem is a recipe for overengineering and overspending.

3. How do businesses typically get started with deploying machine learning models?

The most practical starting point is usually a clearly defined, measurable problem where you already have some historical data. A specific outcome to predict or a specific content task to automate gives you something to evaluate progress against. From there, the typical path is: clean and understand your data, establish a simple baseline, experiment with progressively more complex models, and evaluate them rigorously before deploying. Many organizations choose to work with external partners early on, both to accelerate the timeline and to avoid architectural decisions that become expensive to undo later.

4. What makes a machine learning model “trustworthy” for regulated industries?

Trust in regulated contexts usually comes down to three things: explainability, auditability, and consistency. Explainability means the model can provide a comprehensible reason for a given output, not just a score. Auditability means there is a clear record of how the model was trained, on what data, and when. Consistency means the model performs reliably across demographic groups and edge cases, not just on average. This is why tree-based models like gradient boosting remain dominant in credit and insurance despite the rise of deep learning. They are far easier to explain to a regulator. Explainable AI tooling is bridging this gap for neural networks, but it remains an active challenge.

5. Is open-source ML actually viable for enterprise use, or is it just for researchers?

Open-source models have crossed a meaningful threshold in the past two years. Meta’s Llama 4, DeepSeek V3, and Alibaba’s Qwen 3 are not research toys. They are production-capable models that large organizations are running in self-hosted environments, often for reasons of cost, data privacy, or customization that proprietary APIs cannot accommodate. The trade-off is that open-source requires more internal infrastructure and expertise to deploy well. You own the model, but you also own the maintenance. For teams with strong ML engineering capacity or access to a reliable implementation partner, the economics and flexibility of open-source are increasingly compelling.

Sources: McKinsey Global AI Report | Research Nester | Amplitude AI Playbook 2026 | IEA Data Center Report | MarketsandMarkets XAI Forecast | Grand View Research | Pluralsight AI Models 2026 | Clarifai LLM Guide 2026