Computer vision projects in healthcare are actively reshaping how clinicians detect disease, interpret medical images, and act on diagnostic data. In short, they work by training algorithms on massive labeled datasets of scans, slides, and images so the system learns to flag anomalies that human eyes might miss or catch too late. The impact is measurable and growing fast.

What Is Computer Vision in Healthcare Diagnostics?

At its core, computer vision in healthcare means teaching machines to “see” medical images and extract clinically meaningful information from them. This covers a wide range of inputs: X-rays, MRIs, CT scans, pathology slides, retinal images, dermoscopy photos, and even surgical video feeds.

The technology does not replace radiologists or pathologists. What it does is handle the volume problem. A single radiologist might review 50 to 100 scans in a shift. An AI-assisted system can process thousands, flagging priority cases and filtering out routine ones. The physician then focuses cognitive energy on the hard calls, not the administrative sorting.

Widely cited estimates attribute approximately 40,000 to 80,000 deaths each year in the United States alone to diagnostic errors. Computer vision tools that improve early detection rates address part of this gap directly.

How Do Computer Vision Projects in Healthcare Actually Work?

The pipeline starts with data. Healthcare institutions collect enormous quantities of imaging data over years of clinical operation. This data gets annotated by domain experts, essentially telling the model: this region is a tumor, this cell morphology is abnormal, this opacity is consistent with pneumonia.

The model trains on these labeled examples using deep learning architectures, particularly convolutional neural networks, which are well-suited for spatial pattern recognition. Once trained, the model can analyze new images and produce outputs ranging from binary flags to segmentation maps that outline the exact boundaries of a lesion.
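To make the convolution step less abstract, here is a minimal sketch in plain Python of what a single convolutional filter does and how its response can become both a segmentation-style mask and a binary flag. The kernel values, the threshold, and the tiny 5x5 "scan" are all made up for illustration; a real network learns many kernels from data and stacks many layers.

```python
def conv_relu(image, kernel):
    """Slide a kernel over a 2D image (no padding, stride 1), then apply ReLU."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(len(image) - kh + 1):
        row = []
        for c in range(len(image[0]) - kw + 1):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(max(acc, 0.0))  # ReLU keeps only positive responses
        out.append(row)
    return out


def mask_and_flag(feature_map, threshold):
    """Turn a feature map into a per-pixel mask and a single yes/no flag."""
    mask = [[1 if v >= threshold else 0 for v in row] for row in feature_map]
    flagged = any(any(row) for row in mask)
    return mask, flagged


# Hypothetical 5x5 image: dark background with one bright spot in the center.
image = [[0.0] * 5 for _ in range(5)]
image[2][2] = 1.0

# Hand-written center-surround kernel (an assumption; real kernels are learned).
kernel = [[-1, -1, -1],
          [-1,  8, -1],
          [-1, -1, -1]]

feature_map = conv_relu(image, kernel)
mask, flagged = mask_and_flag(feature_map, threshold=4.0)
```

The mask marks where the filter responded strongly, and the flag collapses that into the kind of binary output a triage tool might emit.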

Clinical deployment is where things get nuanced. A model trained on data from one hospital system may perform differently when deployed at another due to variations in imaging equipment, patient demographics, or scan protocols. This is why validation across diverse datasets matters before any system goes live.
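One simple way to surface this kind of site-to-site drift is to break validation metrics out per hospital instead of reporting a single pooled number. The sketch below assumes each prediction is recorded as a `(site, true_label, predicted_label)` tuple with 0/1 labels; the site names and counts are invented for illustration.

```python
from collections import defaultdict


def per_site_metrics(records):
    """Compute sensitivity and specificity separately for each site."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for site, y_true, y_pred in records:
        if y_true == 1:
            counts[site]["tp" if y_pred == 1 else "fn"] += 1
        else:
            counts[site]["fp" if y_pred == 1 else "tn"] += 1

    metrics = {}
    for site, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        metrics[site] = {
            # None signals that the site had no cases of that class.
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return metrics


# Made-up validation results from two hypothetical sites.
records = (
    [("hospital_a", 1, 1)] * 4 + [("hospital_a", 0, 0)] * 3 + [("hospital_a", 0, 1)]
    + [("hospital_b", 1, 1)] * 2 + [("hospital_b", 1, 0)] * 2 + [("hospital_b", 0, 0)] * 4
)
site_metrics = per_site_metrics(records)
```

In this toy data the model catches every positive case at the first site but only half at the second, a gap that a pooled metric would partly hide.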

Beyond radiology, computer vision is being used to analyze pathology slides at the cellular level, identify diabetic retinopathy from fundus photographs, detect skin cancers from dermatology images, and guide robotic surgical systems in real time.

Key Diagnostic Challenges These Systems Address

Volume and Backlog

Imaging volumes have grown faster than the radiologist workforce in most developed countries. In the United Kingdom, NHS imaging waiting lists have exceeded 1.5 million patients at various points in recent years. Computer vision tools can pre-screen images, automatically route urgent cases, and reduce the time from scan to report significantly.
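The routing idea can be sketched as a priority queue: each incoming study gets an urgency score from the model, and the reading worklist always serves the highest score first. The `Worklist` class, the study IDs, and the scores below are hypothetical; a production system would sit behind the PACS and the reporting workflow.

```python
import heapq
from itertools import count


class Worklist:
    """Reading queue that hands out the most urgent study first."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker: equal scores stay first-in, first-out

    def add(self, study_id, urgency_score):
        # heapq is a min-heap, so the score is negated to pop the highest first.
        heapq.heappush(self._heap, (-urgency_score, next(self._arrival), study_id))

    def next_study(self):
        """Return the next study ID to read, or None if the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


worklist = Worklist()
worklist.add("cxr-001", 0.10)      # routine
worklist.add("ct-head-002", 0.95)  # model suspects an urgent finding
worklist.add("cxr-003", 0.10)      # routine, arrived later
worklist.add("cxr-004", 0.60)

reading_order = [worklist.next_study() for _ in range(4)]
```

The arrival counter matters: without it, two routine studies with the same score could be read out of order, which is the kind of detail that erodes trust in an automated worklist.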

Consistency Across Readings

Two radiologists reviewing the same scan do not always reach the same conclusion. Inter-reader variability is a documented problem, especially in mammography and chest imaging. Algorithmic reading introduces a consistent baseline that can be audited, updated, and validated systematically.
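Inter-reader variability is usually quantified with an agreement statistic such as Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below computes it for two readers; the ten readings are invented for illustration.

```python
from collections import Counter


def cohens_kappa(reader_a, reader_b):
    """Cohen's kappa for two readers labeling the same cases."""
    if len(reader_a) != len(reader_b) or not reader_a:
        raise ValueError("Both readers must label the same non-empty set of cases")
    n = len(reader_a)

    # Fraction of cases where the two readers gave the same label.
    observed = sum(a == b for a, b in zip(reader_a, reader_b)) / n

    # Agreement expected by chance, from each reader's label frequencies.
    freq_a, freq_b = Counter(reader_a), Counter(reader_b)
    labels = set(freq_a) | set(freq_b)
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)

    if expected == 1.0:  # both readers used a single identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical reads of the same ten mammograms by two radiologists.
reader_a = ["pos", "pos", "neg", "neg", "pos", "neg", "neg", "neg", "pos", "neg"]
reader_b = ["pos", "neg", "neg", "neg", "pos", "neg", "pos", "neg", "pos", "neg"]
kappa = cohens_kappa(reader_a, reader_b)
```

Here the readers agree on 8 of 10 cases, but kappa comes out near 0.58 because some of that agreement would be expected by chance. The same calculation works for comparing an algorithm against a human reader.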

Early Detection at Scale

Early-stage disease is notoriously difficult to catch: a subtle nodule in a lung CT, a tiny irregularity in a mammogram, the early signs of glaucoma in a retinal image. Human readers working under time pressure can miss these. Computer vision models trained on early-stage positive cases can be tuned specifically to catch what is easy to overlook.

Geographic and Resource Gaps

Not every hospital has access to subspecialty radiologists. Remote or underserved regions often rely on general practitioners interpreting imaging that would ideally go to a specialist. AI-assisted diagnostic tools help bridge this gap by bringing subspecialty-level pattern recognition to settings where that expertise is unavailable in person.

Comparing Traditional Diagnostics vs. Computer Vision-Assisted Diagnostics

| Factor | Traditional Workflow | CV-Assisted Workflow |
| --- | --- | --- |
| Image review speed | Minutes to hours per scan | Seconds to minutes |
| Consistency | Variable across readers | Standardized baseline |
| After-hours coverage | Delayed or on-call only | Continuous |
| Early detection sensitivity | Dependent on experience | Optimized for subtle findings |
| Scalability | Limited by workforce | Scales with compute |
| Cost over time | Fixed staffing costs | Decreasing per-unit cost |

This comparison is not meant to suggest that automation eliminates the need for clinical judgment. It shows where computer vision adds the most measurable value within an existing diagnostic workflow.

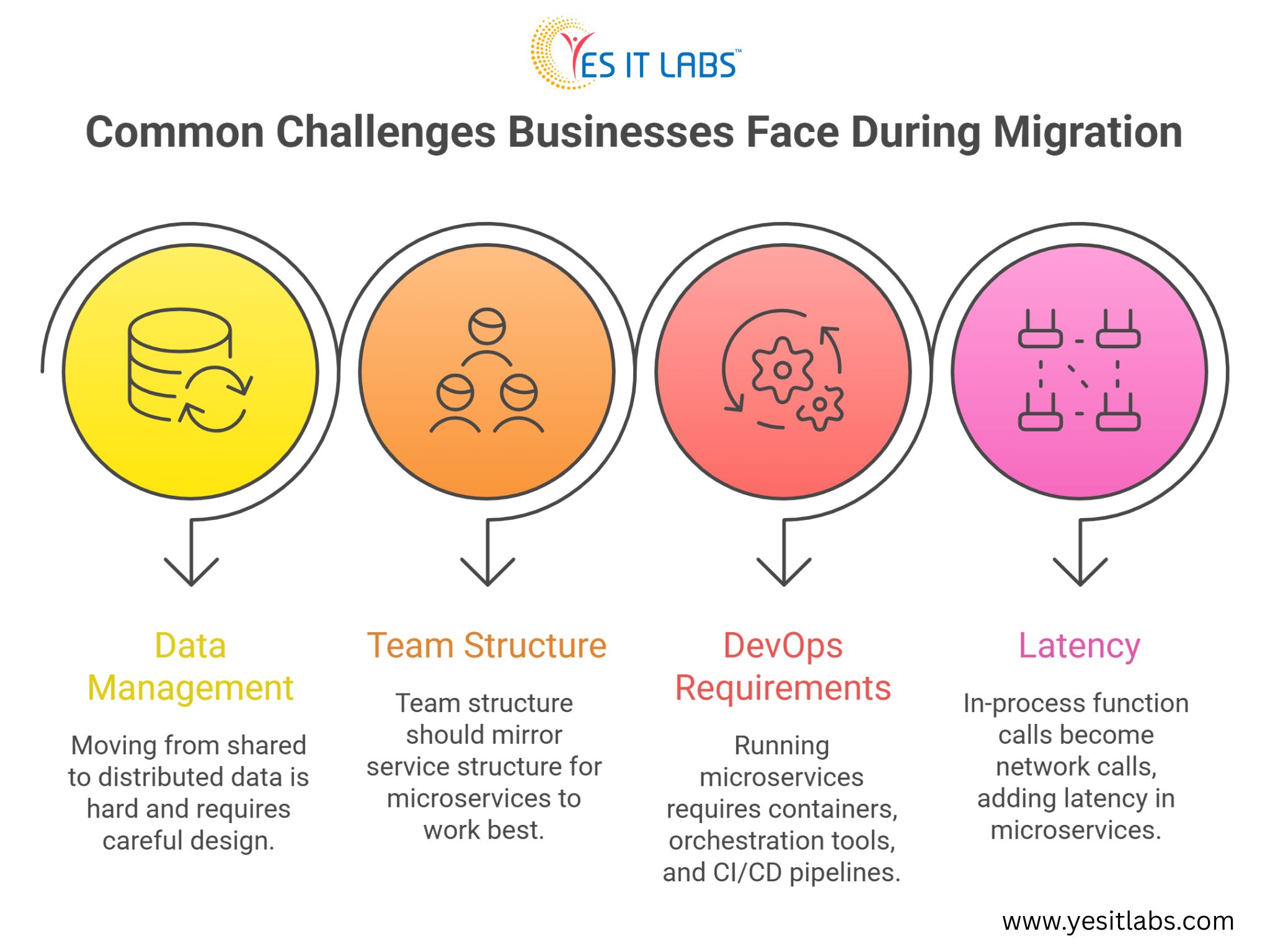

What Makes Computer Vision Project Implementation Actually Difficult in Healthcare

The technology itself is increasingly mature. The harder problems are organizational, regulatory, and technical in ways that do not get discussed as often.

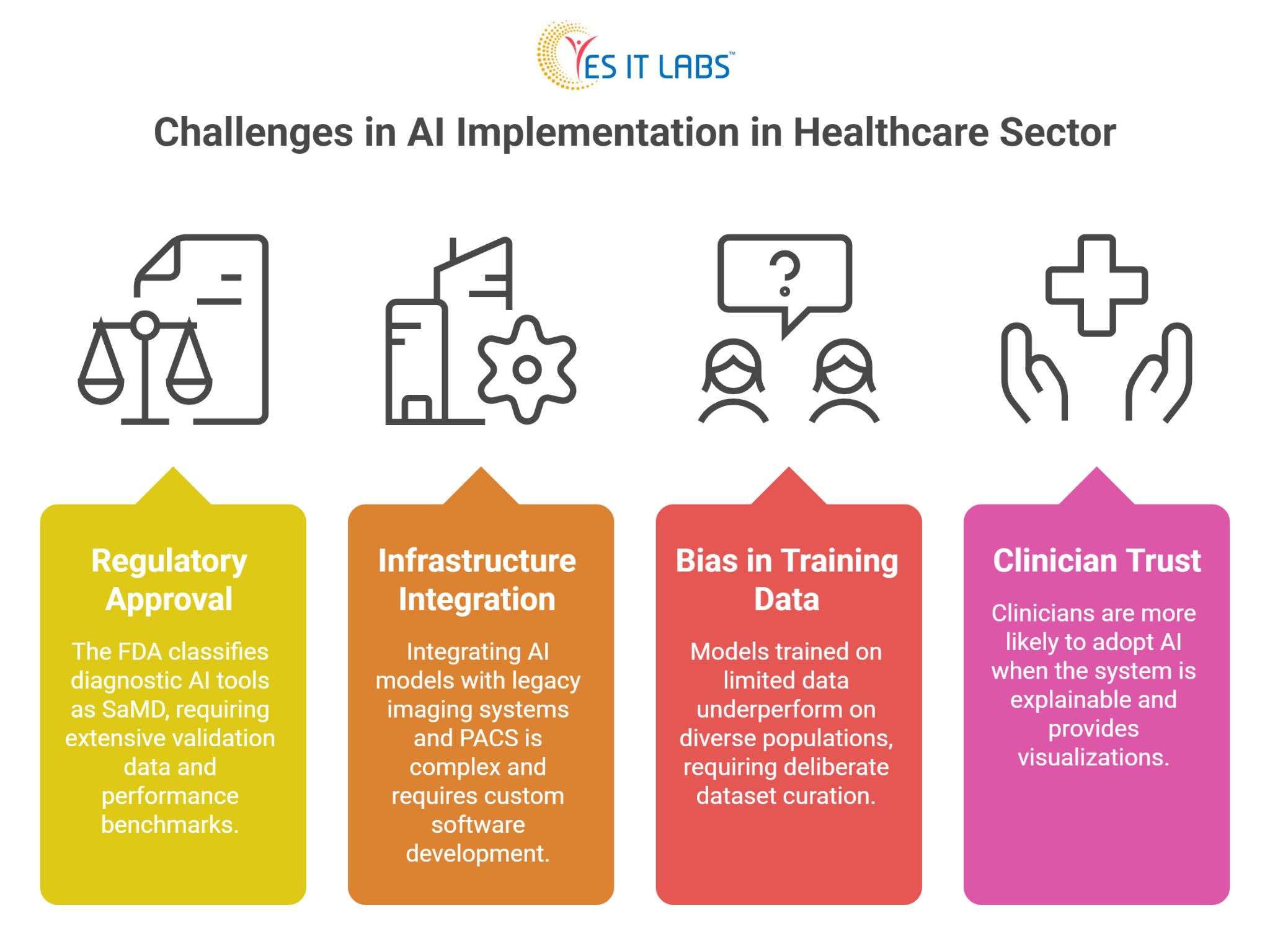

Regulatory Approval

In the United States, the FDA regulates most diagnostic AI tools as Software as a Medical Device (SaMD), which means they need marketing authorization before clinical use. The approval pathway demands extensive validation data, performance benchmarks across demographic subgroups, and, for 510(k) clearance, a comparison against a predicate device. This process takes time and resources.

Integration with Existing Infrastructure

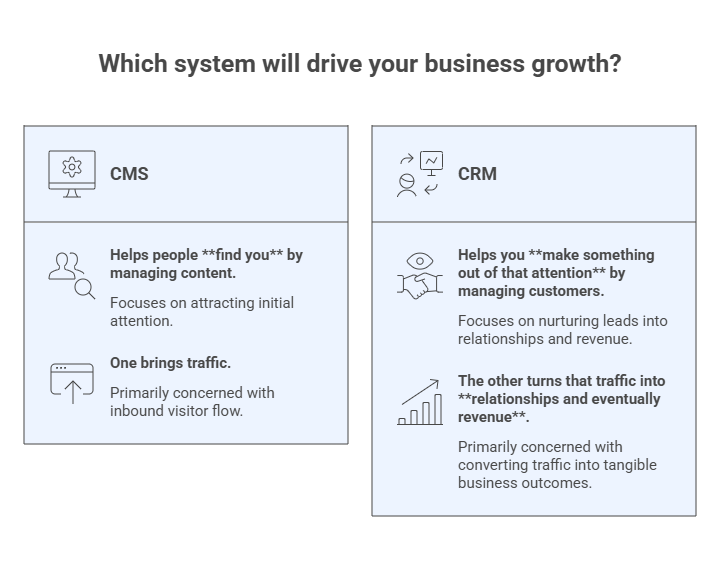

Most hospitals run legacy imaging systems that speak DICOM but were not designed with AI ingestion in mind. Getting a computer vision model to talk to a PACS (Picture Archiving and Communication System) and return structured output into an electronic health record is not trivial. It requires custom medical software development that accounts for workflow specifics, data formats, and security requirements.

Bias in Training Data

If a model trains primarily on data from a specific patient population, it is likely to underperform on others. Skin tone affects dermatology model accuracy. Demographic differences affect disease prevalence and presentation. Building models that perform equitably requires deliberate dataset curation, which is time-consuming and expensive.

Clinician Trust and Adoption

Even a well-performing model struggles if clinicians do not trust it. Explainability matters here. Physicians are more likely to act on an AI recommendation when the system can show, through a heatmap or similar visualization, which regions of the image influenced the output.
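One model-agnostic way to produce such a heatmap is occlusion sensitivity: mask one patch of the image at a time and record how much the model's score drops. The sketch below is a minimal pure-Python version. The `toy_score` function is a hypothetical stand-in for a trained model, and the patch size and fill value are arbitrary choices.

```python
def occlusion_heatmap(image, score_fn, patch=2, fill=0.0):
    """Score drop per patch: a larger drop means the region mattered more."""
    baseline = score_fn(image)
    height, width = len(image), len(image[0])
    heat = [[0.0] * width for _ in range(height)]

    for r0 in range(0, height, patch):
        for c0 in range(0, width, patch):
            rows = range(r0, min(r0 + patch, height))
            cols = range(c0, min(c0 + patch, width))

            # Copy the image and blank out one patch.
            occluded = [row[:] for row in image]
            for r in rows:
                for c in cols:
                    occluded[r][c] = fill

            drop = baseline - score_fn(occluded)
            for r in rows:
                for c in cols:
                    heat[r][c] = drop
    return heat


def toy_score(image):
    """Hypothetical 'model' that only looks at the top-left 2x2 region."""
    return sum(image[r][c] for r in range(2) for c in range(2)) / 4.0


image = [[1.0] * 4 for _ in range(4)]
heat = occlusion_heatmap(image, toy_score, patch=2)
```

Because the toy model only reads the top-left corner, only that patch shows a score drop. Gradient-based methods are common alternatives; occlusion is shown here because it needs nothing but the ability to call the model.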

Practical Advice for Healthcare Organizations Exploring This Space

1. Start with a well-defined problem rather than a broad ambition. “Improve diagnostics” is too vague. “Reduce time to report for chest X-rays flagged as urgent” is actionable and measurable.

2. Invest in data governance before you invest in models. The quality and completeness of your imaging archive will determine the ceiling of what any model can achieve.

3. When building or procuring these systems, consider whether to hire dedicated full stack developers with healthcare IT experience. Integration work across imaging systems, EHRs, and clinical interfaces requires a specific combination of backend depth and regulatory awareness.

4. Pilot in a low-stakes clinical context first. Use the pilot to measure real-world performance against your validation benchmarks, gather clinician feedback, and identify edge cases before scaling.

5. Do not underestimate the change management dimension. Technical deployment is one piece. Getting radiologists, pathologists, and referring physicians to actually use the tool in their daily workflow is a separate project with its own requirements.

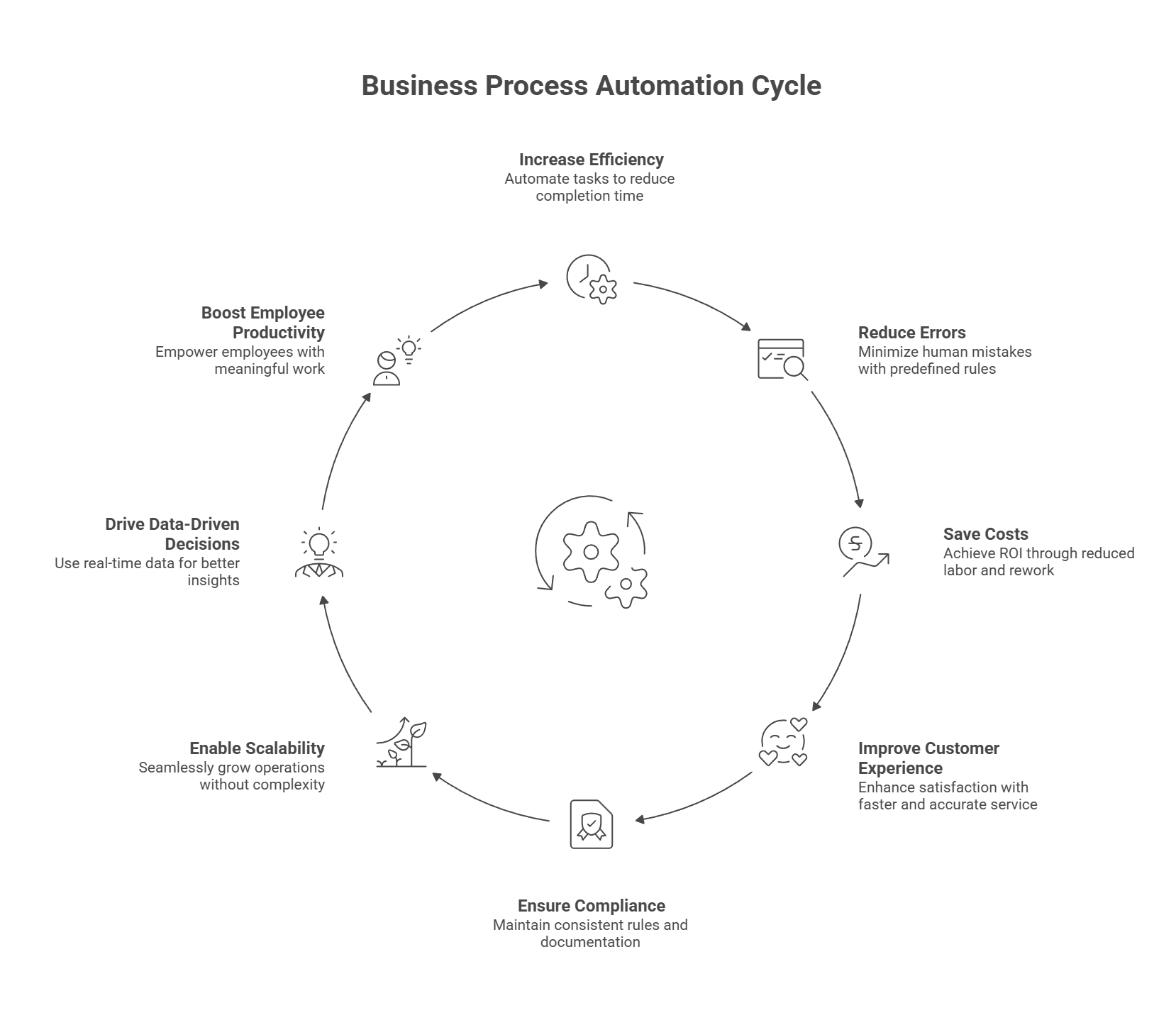

6. For enterprise-wide rollouts, draw on business automation software development experience. It applies directly to healthcare imaging workflows when the goal is automating routing, worklist prioritization, and reporting rather than just the image analysis itself.

7. For cloud-integrated deployments built on Microsoft Azure Health Data Services or Azure AI, it can make sense to hire Microsoft developers with healthcare cloud experience, since these deployments depend on Azure's FHIR and DICOM data services.

FAQ: Computer Vision in Healthcare Diagnostics

What types of medical conditions can computer vision help diagnose?

Computer vision is currently in clinical use or active validation for detecting lung nodules, breast cancer in mammography, diabetic retinopathy, glaucoma, skin lesions, bone fractures, intracranial hemorrhage, cardiac abnormalities, and various cancers in pathology slides. The range is expanding as more training data becomes available across specialties.

How accurate are computer vision diagnostic tools compared to human clinicians?

Accuracy varies widely by task, dataset, and model design. In narrow, well-defined tasks like diabetic retinopathy screening from fundus photographs, some FDA-cleared systems have demonstrated sensitivity and specificity comparable to specialist ophthalmologists. For more complex diagnostic tasks, AI tools typically perform best when used to support rather than replace clinical judgment.

What data is needed to build a healthcare computer vision model?

You need large volumes of labeled medical images relevant to your target condition, ideally representing demographic diversity. For rare conditions, data augmentation and transfer learning from related datasets can help. Annotation must be done by qualified clinicians, not crowdsourced, as the labeling quality directly determines the model’s clinical reliability.
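As a rough illustration of data augmentation, the sketch below generates flipped and rotated variants of a single image represented as a nested list. The function names are made up for this example. Whether a given transform preserves the label is an assumption to check with clinicians: a horizontal flip, for instance, changes laterality, which matters in some imaging tasks.

```python
def hflip(image):
    """Mirror the image left to right."""
    return [row[::-1] for row in image]


def rot90_clockwise(image):
    """Rotate the image 90 degrees clockwise."""
    return [list(col) for col in zip(*image[::-1])]


def augment(image):
    """Return the original image plus simple geometric variants."""
    rotated = rot90_clockwise(image)
    return [image, hflip(image), rotated, hflip(rotated)]


# Tiny 2x2 stand-in for an image patch.
patch = [[1, 2],
         [3, 4]]
variants = augment(patch)
```

Each variant is a new training example with the same label, which stretches a small set of confirmed cases further without collecting new scans.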

How long does it take to deploy a computer vision system in a hospital?

Timeline depends heavily on the scope and regulatory pathway. A proof-of-concept on internal data might take three to six months. A production deployment with regulatory clearance, EHR integration, and change management can take two to four years. Organizations that underestimate the non-technical phases consistently run over schedule.

Is computer vision in healthcare cost-effective for smaller hospitals?

Smaller hospitals often lack the internal data and technical infrastructure to build proprietary models. The more practical path is procuring validated third-party solutions designed to integrate with common hospital systems. Cloud-based deployment models have reduced the upfront cost significantly, making the technology accessible outside large academic medical centers.

Conclusion

Computer vision projects in healthcare are not a future possibility. They are a present operational reality in many health systems, and the gap between early adopters and late movers is widening. The technology addresses real diagnostic bottlenecks, consistency problems, and access gaps that have persisted in clinical medicine for decades.

The organizations seeing the most success are not the ones with the flashiest AI ambitions. They are the ones that defined a specific problem, built the data infrastructure to support model development, integrated carefully with existing clinical workflows, and treated clinician adoption as a core project deliverable rather than an afterthought.

The diagnostic challenges in healthcare are significant and largely unsolved at scale. Computer vision is one of the few tools with both the technical capability and the growing evidence base to make a measurable difference.